By Manusha Rao

LightGBM has gained popularity recently because it is faster than other gradient boosting algorithms. This article describes how to use the LightGBM algorithm to predict the daily movement of the NIFTY50.

What is LightGBM?

LightGBM stands for Light Gradient Boosting Machine. It is a tree-based machine learning technique and is considered fast compared to other similar algorithms.

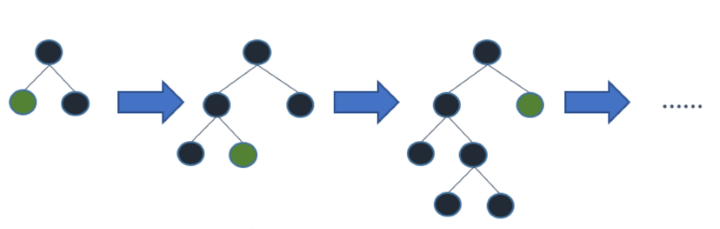

With so many tree-based algorithms already available, you might wonder what sets LightGBM apart. The difference is that LightGBM grows its trees leaf-wise (vertically, one leaf at a time), while most other tree-based algorithms grow level-wise (horizontally, one level at a time), as shown in the accompanying image.

Working principle of LightGBM

LightGBM is based on two main principles that set it apart from other gradient boosting algorithms.

Gradient-based One-Side Sampling (GOSS)

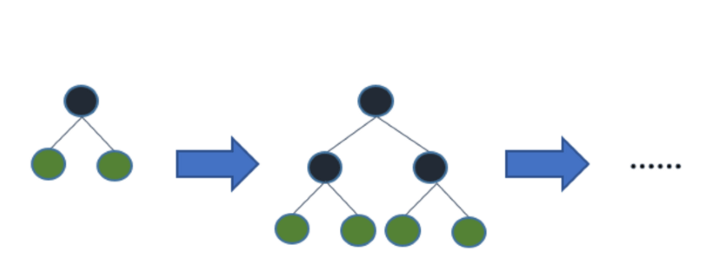

This technique focuses attention on samples with larger gradients (i.e., larger loss). Let’s dig a little deeper into this concept.

Consider a base model trained on 500 samples. After training, we get 500 errors {X1, X2, X3, ..., X500}. GOSS sorts these errors in descending order of absolute value, that is, from the largest to the smallest absolute error.

GOSS then keeps the top a% of instances, those with the largest gradients, and randomly samples b% of instances from the remainder, which has smaller gradients. The sampled small-gradient instances are up-weighted, so the next tree concentrates on poorly fitted instances without distorting the overall data distribution too much.
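The sampling step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not LightGBM's internal code: the function name `goss_sample`, the values a = 0.2 and b = 0.1, and the random gradients are all assumptions made for the example. The weight (1 - a) / b applied to the randomly sampled instances follows the description in the LightGBM paper.

```python
import random

def goss_sample(gradients, a=0.2, b=0.1, seed=0):
    """Return (indices, weights) selected by a GOSS-style sampling step."""
    n = len(gradients)
    # Rank instance indices by absolute gradient, largest first.
    order = sorted(range(n), key=lambda i: abs(gradients[i]), reverse=True)
    top_k = round(a * n)   # instances that are always kept
    rand_k = round(b * n)  # instances sampled from the remainder
    top, rest = order[:top_k], order[top_k:]
    sampled = random.Random(seed).sample(rest, rand_k)
    # Up-weight the sampled small-gradient instances to compensate
    # for the instances that were dropped.
    weight = (1 - a) / b
    return top + sampled, [1.0] * top_k + [weight] * rand_k

# Hypothetical gradients for the 500 samples in the example above.
rng = random.Random(42)
gradients = [rng.uniform(-1, 1) for _ in range(500)]
indices, weights = goss_sample(gradients)
print(len(indices))  # 100 kept + 50 sampled = 150
```

With these assumed settings, only 150 of the 500 instances take part in building the next tree.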

Exclusive Feature Bundling (EFB)

Machine learning models typically have many features. What if you could reduce the number of features and increase speed? LightGBM offers just that. Let’s split the EFB into two parts.

1). Bundling similar features together: a weighted graph is used to decide which features can be grouped.

2). Feature merging: after bundling, features that never take nonzero values at the same time are merged.

Let’s understand this with a simple example.

Consider a machine learning model that attempts to assess an investor’s risk aversion based on a large number of features (inputs). Consider two of these features: “male” and “female”. They never take nonzero values at the same time, so they can be merged into one feature. As a result, two features are reduced to one.

| Man | Woman | Merged bundle |
| --- | --- | --- |
| 0 | 1 | 1 |
| 1 | 0 | 2 |
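The merge in the table above can be reproduced with a short sketch. The helper name `merge_exclusive` is hypothetical, and the encoding (1 for “Woman”, 2 for “Man”) simply mirrors the table. LightGBM's own bundling works on binned feature values and is more general than this.

```python
def merge_exclusive(man, woman):
    """Merge two mutually exclusive binary columns into one bundle column."""
    bundle = []
    for m, w in zip(man, woman):
        if m and w:
            raise ValueError("features are not mutually exclusive")
        # Give each source feature its own value in the bundle so the
        # original columns can still be told apart after merging.
        if w:
            bundle.append(1)
        elif m:
            bundle.append(2)
        else:
            bundle.append(0)
    return bundle

man = [0, 1, 0, 1]
woman = [1, 0, 1, 0]
bundle = merge_exclusive(man, woman)
print(bundle)  # [1, 2, 1, 2]
```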

Why is LightGBM so popular?

Some of the reasons why the LightGBM algorithm has received so much attention are:

Handle large datasets

LightGBM is generally reported to handle large datasets more efficiently than XGBoost.

Faster training speed

As the timing outputs below show, LightGBM was roughly 3 to 4 times faster than XGBoost in this run (about 3.3x by wall time and 4.2x by CPU time).

output:

CPU times: total: 578 ms Wall time: 138 ms LGBMClassifier(learning_rate=0.01, max_depth=5, n_estimators=200, num_leaves=80)

output:

CPU times: total: 2.44 s Wall time: 461 ms XGBClassifier(base_score=0.5, booster="gbtree", callbacks=None, colsample_bylevel=1, colsample_bynode=1, colsample_bytree=1, early_stopping_rounds=None, enable_categorical=False, eval_metric=None, feature_types=None, gamma=0, gpu_id=-1, grow_policy='depthwise', importance_type=None, learning_rate=0.01, max_depth=5, n_estimators=200, num_leaves=80)
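The speed-up can be checked directly from the two timing outputs above. The snippet below only redoes that arithmetic with the reported numbers and does not re-run either model.

```python
# Timings copied from the two outputs above, in milliseconds.
lgbm_cpu_ms, lgbm_wall_ms = 578, 138
xgb_cpu_ms, xgb_wall_ms = 2440, 461

wall_ratio = xgb_wall_ms / lgbm_wall_ms
cpu_ratio = xgb_cpu_ms / lgbm_cpu_ms
print(f"wall-time speed-up: {wall_ratio:.1f}x")  # 3.3x
print(f"CPU-time speed-up: {cpu_ratio:.1f}x")    # 4.2x
```

A single run on one dataset is only indicative. The ratio will vary with data size, parameters, and hardware.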

Less memory usage

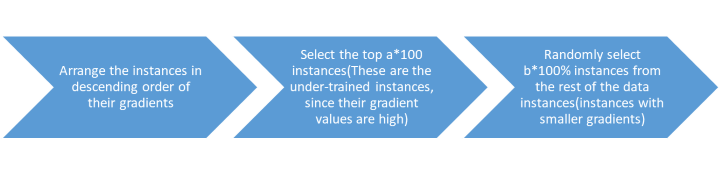

LightGBM achieves this through a technique called binning. So what is binning? Binning is the process of transforming a continuous variable or feature (such as a stock price) into a discrete set of bins. Consider the NIFTY50 adjusted closing data from December 1, 2021 to November 30, 2022 (that is, about 252 trading days).

That gives 252 data points (adjusted closing prices), each of which would otherwise be stored as a separate continuous value. To illustrate how binning reduces memory use, the prices were grouped into a histogram in Excel. Here the prices are broken down into 8 bins (X-axis), and the Y-axis shows the number of values in each bin.

The count is shown at the top of each bin. This way, the 252 data points are summarized by 8 bins, which reduces memory usage.
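The histogram described above can be imitated with equal-width bins in plain Python. This is an illustrative sketch only: the prices are randomly generated stand-ins rather than real NIFTY50 data, the helper `bin_values` is hypothetical, and LightGBM chooses its own bin boundaries internally rather than using this equal-width scheme.

```python
import random

def bin_values(values, n_bins=8):
    """Map each value to the index of an equal-width bin (0 .. n_bins - 1)."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / n_bins
    if width == 0:
        return [0] * len(values)
    # The maximum value would fall just outside the last bin, so clamp it.
    return [min(int((v - lo) / width), n_bins - 1) for v in values]

# Hypothetical prices standing in for 252 daily adjusted closes.
rng = random.Random(0)
prices = [rng.uniform(15000, 18800) for _ in range(252)]

bin_ids = bin_values(prices)
counts = [bin_ids.count(b) for b in range(8)]
print(counts)       # number of prices in each of the 8 bins
print(sum(counts))  # 252
```

Each price is now represented by a small integer between 0 and 7 instead of a floating-point value, which is where the memory saving comes from.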

Supports GPU training

GPUs support parallel processing. This type of processing divides large, complex problems into smaller tasks that can be processed simultaneously.

Easy to encode categorical features

One-hot encoding is the first thing that comes to mind when talking about representing categorical values. However, LightGBM can process integer-encoded categorical features directly, using a more efficient and faster algorithm. This algorithm is adapted from the article “On Grouping for Maximum Homogeneity” by Fisher (1958) ⁽¹⁾.

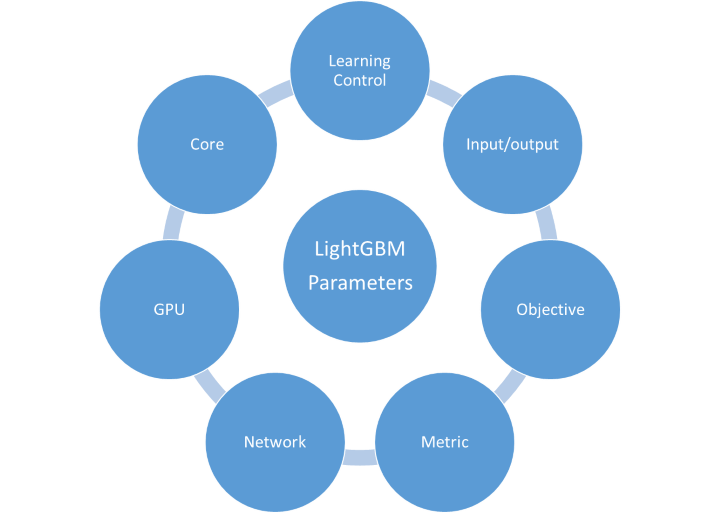

LightGBM parameters

The official documentation lists over 100 parameters. They are grouped under various headings, as the accompanying image shows.

However, we will only look at a few important parameters.

| Parameter | Explanation | Default |
| --- | --- | --- |
| boosting_type | Specifies the type of boosting method. One of gbdt, dart, goss. | gbdt |
| max_depth | Specifies the maximum number of levels each trained tree can have; -1 means no limit. Very high values can lead to overfitting and increase training time. | -1 |
| num_leaves | Specifies the maximum number of leaves each tree can have. | 31 |
| min_data_in_leaf | Specifies the minimum number of data points (samples) per leaf. | 20 |
| feature_fraction | Specifies the fraction of features (columns) used to train each tree. For example, with a value of 0.7, 70% of the features are used for each tree. Helps avoid overfitting and improves training speed. | 1.0 |
| bagging_fraction | Specifies the fraction of samples (rows) used to build each tree. Improves training speed. | 1.0 |

For a more comprehensive list, see the official LightGBM documentation.
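As a quick reference, the defaults from the table can be written as a Python dictionary, which is a common way to organize LightGBM parameters. The overrides below (max_depth=5, num_leaves=80) are taken from the LGBMClassifier output shown earlier in this article. The helper `leaves_exceed_depth` is a hypothetical convenience, not part of LightGBM.

```python
# Defaults as listed in the parameter table above.
default_params = {
    "boosting_type": "gbdt",
    "max_depth": -1,          # -1 means no depth limit
    "num_leaves": 31,
    "min_data_in_leaf": 20,
    "feature_fraction": 1.0,
    "bagging_fraction": 1.0,
}

# Overrides matching the classifier output shown earlier in the article.
custom_params = {**default_params, "max_depth": 5, "num_leaves": 80}

def leaves_exceed_depth(params):
    """True if num_leaves is more than a tree of max_depth levels can hold."""
    depth = params["max_depth"]
    return depth > 0 and params["num_leaves"] > 2 ** depth

print(leaves_exceed_depth(default_params))  # False
print(leaves_exceed_depth(custom_params))   # True: 80 > 2**5 = 32
```

A tree limited to 5 levels can have at most 32 leaves, so in that configuration max_depth, not num_leaves, is the binding constraint.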

Implementation in Python

The code here predicts the direction (1 or -1) of the NIFTY50 price movement for the next day based on a set of input features. The model uses 20 years of historical data.

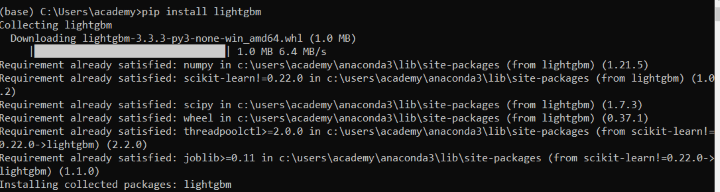

Install LightGBM

First, install the LightGBM package into your Anaconda environment.

There are two ways to do this:

Using Anaconda Prompt

To open the Anaconda Prompt on Windows, click Start, search for Anaconda Prompt, and click to open it. Then enter the command “pip install lightgbm”. The screenshot below shows the installation.

Using Jupyter notebooks

In a Jupyter notebook, you can install the lightgbm package using the following line of code: “!pip install lightgbm”.

!pip install lightgbm

Import the required libraries

Download data

We use 15 years of daily NIFTY50 data downloaded from Yahoo Finance using the yfinance module. We drop the “Close” column from the downloaded data and rename the “Adj Close” column to “AdjClose”, since we are only interested in the adjusted closing price.

Calculate daily changes in technical indicators and prices

In this step, we will calculate three indicators which are the input features of the LightGBM model: Exponential Moving Average (EMA), RSI and Bollinger Bands.
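To show what these three indicators compute, here is a minimal pure-Python sketch. It is not the article's original code. The window lengths, the use of the population standard deviation for the bands, and the simple-average form of the RSI are all assumptions made for the example, and the closing prices are made up.

```python
from statistics import mean, pstdev

def ema(values, span):
    """Exponential moving average with smoothing factor 2 / (span + 1)."""
    alpha = 2 / (span + 1)
    out = [values[0]]
    for v in values[1:]:
        out.append(alpha * v + (1 - alpha) * out[-1])
    return out

def bollinger(values, window, k=2):
    """Return (middle, upper, lower) bands; None until a full window exists."""
    middle, upper, lower = [], [], []
    for i in range(len(values)):
        if i + 1 < window:
            middle.append(None)
            upper.append(None)
            lower.append(None)
            continue
        chunk = values[i + 1 - window:i + 1]
        m, s = mean(chunk), pstdev(chunk)
        middle.append(m)
        upper.append(m + k * s)
        lower.append(m - k * s)
    return middle, upper, lower

def rsi(values, window):
    """Simple-average RSI over the last `window` price changes."""
    out = [None] * len(values)
    for i in range(window, len(values)):
        changes = [values[j] - values[j - 1] for j in range(i - window + 1, i + 1)]
        gain = sum(c for c in changes if c > 0)
        loss = -sum(c for c in changes if c < 0)
        # 100 * gain / (gain + loss) equals 100 - 100 / (1 + gain / loss).
        out[i] = 50.0 if gain + loss == 0 else 100 * gain / (gain + loss)
    return out

closes = [100, 102, 101, 105, 107, 106, 110, 108]  # made-up closing prices
ema_values = ema(closes, span=3)
middle_band, upper_band, lower_band = bollinger(closes, window=3)
rsi_values = rsi(closes, window=3)
```

In practice these columns are usually computed with pandas or a technical-analysis library. The loops here only make the definitions explicit.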

Next, calculate the daily change in price, which is the target variable (the value the model predicts). It is calculated as follows:

- If tomorrow’s close > today’s close, change = 1

- If tomorrow’s close < today’s close, change = -1
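The labeling rule above can be sketched as follows. The article does not say how an unchanged close is labeled, so this sketch assumes such days fall into the -1 class. The function name and prices are hypothetical.

```python
def label_next_day_change(closes):
    """Label each day 1 if the next close is higher, otherwise -1."""
    labels = []
    for today, tomorrow in zip(closes, closes[1:]):
        # Assumption: an unchanged close is treated as -1.
        labels.append(1 if tomorrow > today else -1)
    return labels

closes = [100, 102, 101, 101, 105]  # made-up closing prices
change = label_next_day_change(closes)
print(change)  # [1, -1, -1, 1]
```

The last day has no label because its next-day close is not yet known, so that row is dropped before training.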

Define input features and outputs to predict

All supervised machine learning models need to define input features and target variables.

In this case, the input features (open, high, low, close, ema, rsi, upper_band, lower_band and middle_band) are stored in X, and the target variable (change) is stored in Y.

Split the data into training and test sets

Next, split the data into training and testing datasets (80% – training, 20% – testing).
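One way to make an 80/20 split is sketched below. The article does not state whether the rows were shuffled. This sketch assumes a chronological split (first 80% for training, last 20% for testing), which is a common choice for time series because it keeps future data out of the training set. The helper name is hypothetical.

```python
def chronological_split(rows, train_fraction=0.8):
    """Split time-ordered rows into (train, test) without shuffling."""
    cut = int(len(rows) * train_fraction)
    return rows[:cut], rows[cut:]

rows = list(range(10))  # stand-in for 10 time-ordered observations
train, test = chronological_split(rows)
print(train)  # [0, 1, 2, 3, 4, 5, 6, 7]
print(test)   # [8, 9]
```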

Create a LightGBM model

Initialize the LightGBM model using the LGBMClassifier class with the chosen parameters. Fit the model on 80% of the data and make predictions on the remaining 20% of the data.

Accuracy result

output:

Training accuracy: 0.52 Testing accuracy: 0.55

You have now trained the model and used it to make predictions. As shown above, the test accuracy is 55%.

Can this model be used for prediction? Let’s get into the details. Since there is little difference between training and testing accuracy, overfitting appears unlikely. To better understand the model’s predictive power, we will look at the confusion matrix.

Plot confusion matrix for training and test data

output:

Although the model is only 55% accurate, it can still be useful. If you look closely at the confusion matrix, whenever the model predicts ‘1’, the prediction is correct (yellow square). Therefore, this model is useful for backtesting long-only strategies.
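The point that overall accuracy and the reliability of ‘1’ predictions are different quantities can be shown with a small sketch. The labels below are made up for illustration and are not the article's results. The helper name is hypothetical.

```python
def confusion_counts(actual, predicted, positive=1):
    """Return (tp, fp, tn, fn) counts for the given positive class."""
    tp = fp = tn = fn = 0
    for a, p in zip(actual, predicted):
        if p == positive and a == positive:
            tp += 1
        elif p == positive:
            fp += 1
        elif a == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn

# Hypothetical labels, chosen so that every predicted 1 is correct.
actual = [1, 1, -1, 1, -1, -1, 1, -1]
predicted = [1, -1, -1, 1, -1, -1, -1, -1]

tp, fp, tn, fn = confusion_counts(actual, predicted)
accuracy = (tp + tn) / len(actual)
precision = tp / (tp + fp)  # share of predicted 1s that were really 1
print(accuracy, precision)  # 0.75 1.0
```

A long-only strategy acts only on ‘1’ predictions, so the precision of that class matters more to it than overall accuracy.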

Feature importance

lgb.plot_importance(model)

output:

The plot above shows the importance of each input feature used in the model. This gives you better insight into your model and helps with dimensionality reduction. For example, features such as ‘middle_band’ and ‘low’ have low importance and could be excluded.

Conclusion

Well that was a lot of information to digest! Let’s summarize.

In this blog, we covered the basic working principle of LightGBM and how it differs from other gradient boosting algorithms. We also saw that LightGBM was faster than XGBoost in our comparison. Finally, we implemented LightGBM on the NIFTY50 index and found that the resulting model could be used for long-only strategies.

If you want to step into the field of machine learning and learn more about various machine learning algorithms and their Python implementations, check out the Machine Learning and Deep Learning in Financial Markets course.

Disclaimer: All investments and trading in the stock market involve risk. Any decision to place trades in the financial markets, including trading in stocks, options, or other financial instruments, is a personal decision that should only be made after thorough research, including a personal risk and financial assessment and the engagement of professional assistance to the extent you believe necessary. Any trading strategies or related information provided in this article are for informational purposes only.